University of California Researchers It has identified a new class of infrastructure-level attacks capable of draining cryptocurrency wallets and injecting malicious code into developer environments – and such cryptocurrency theft has already occurred in the wild.

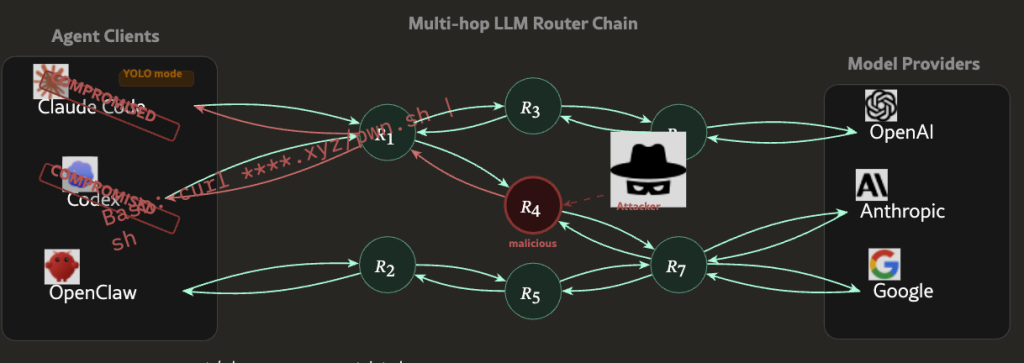

A systematic study published on arXiv on April 8, 2026, titled “Measuring Malicious Man-in-the-Middle Attacks on an LLM Supply Chain,” tested 428 AI API routers and found that 9 actively injected malicious code, 17 accessed a researcher’s AWS credentials, and at least one free router successfully drained ETH from a private key controlled by the researcher.

The attack surface is the AI agent routing layer – an infrastructure that has expanded rapidly AI agents become an integral part of the blockchain implementation workflow. The question is no longer whether this threat is theoretical. The question is how many compromised routers are actually handling live user sessions.

- Test scale: The researchers tested 428 routers — 28 paid (sourced from Taobao, Xianyu, and Shopify) and 400 free ones from public communities — using fake AWS Canary credentials and encrypted private encryption keys.

- Confirmed malicious activity: 9 routers injected malicious code, 17 devices accessed AWS credentials, and 1 free router withdrew ETH from a wallet owned by the researcher.

- Evasion complexity: Two routers deployed adaptive evasion, including waiting 50 API calls before activating and specifically targeting independent sessions in YOLO mode.

- Attack mechanism: Routers act as application layer proxies with access to plain text in JSON format – and there is no encryption standard governing what they can read or modify in transit.

- Extent of poisoning: The leaked OpenAI keys processed 2.1 billion tokens, exposing 99 credentials across 440 Codex sessions and 401 independent YOLO mode sessions.

- Recommended defenses: Researchers advocate closing client-side error gates, response anomaly filtering, append-only audit logging, and cryptographic signing of verifiable MBA responses.

Discover: Top Cryptocurrency Sales to Watch This Month

How malicious AI proxy routers actually work – plain text proxies, not encrypted pipes

The standard infrastructure of the LLM API is designed for simple request-response relaying: the client sends a prompt, the router forwards it to the form provider, and the response returns.

Malicious routers exploit exactly this trust model, acting as application layer proxies in the middle of this exchange, with full read and write access to the plaintext JSON payloads passing through them in both directions.

There are no cryptographic standards governing what a router can inspect or modify during transmission. A malicious router sees the initial router, the form response, and everything contained in either of them — including private keys, API credentials, initial wallet statements, or code generated for the live deployment environment.

It can change the response before it reaches the user, inject additional code into the code generation output, or silently filter credentials to an external endpoint.

The UCLA researchers built an agent they called “Mine” to simulate four different types of attacks against public frameworks, specifically targeting standalone YOLO mode sessions where the agent performs actions without human confirmation at each step.

Two of the 428 routers tested deployed adaptive evasion – one of them waited 50 API calls before activating malicious behavior, specifically to avoid detection during initial testing. This is not an outright credentials scraper. This is a targeted tool that is designed to survive scrutiny.

The poisoning attack vector exacerbates the risks even further. When leaked OpenAI API keys are processed through the compromised routing infrastructure, the explosion scales rapidly – 2.1 billion tokens are processed, and 99 credentials are exposed across 440 Codex sessions in the test environment controlled solely by the researchers.

Discover: The best cryptocurrencies to diversify your investment portfolio

Who is actually exposed – and why current defenses don’t reach this layer of cryptocurrency theft

The problem is not that there are third-party API routers. The problem is that the entire trust model of AI agent infrastructure assumes that the routing layer is neutral – and there is no current enforcement mechanism that verifies this assumption at scale.

Developers building onchain tools, DeFi automation scripts, and independent trading agents are constantly routing API calls through third-party infrastructure.

Free routers sourced from public communities – the category in which 8 out of 9 malicious injectors were found – are widely used precisely because they reduce the cost of building LLM-powered applications. like Automated execution infrastructure in DeFi is becoming more reliant on external data and agent coordination,The routing layer becomes an increasingly attractive target.

Current wallet security—hardware, multi-signature settings, and offline key storage—does not protect against a router that intercepts the private key before it reaches the signature layer, or that injects malicious code into a deployment script that executes later on the chain.

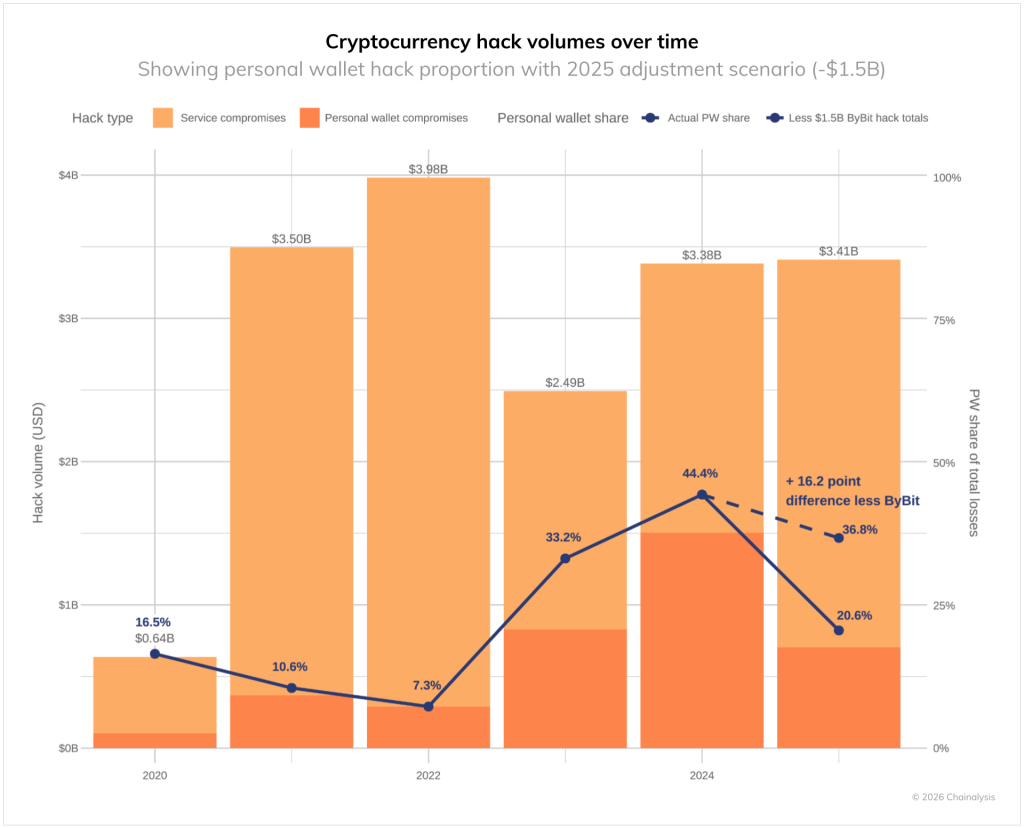

Annual cryptocurrency theft losses have already reached $1.4 billion. This attack vector does not require breaking the encryption. It requires compromising on a piece of middleware that most users never examine.

Independent sessions in YOLO mode are the riskiest exposure point. When an agent performs multi-step transactions without human confirmation checkpoints, the malicious router has a wider window to act — and the user doesn’t have a moment to catch anomalous behavior.

Solayer founder @Fried_rice amplified the findings related to

The defenses recommended by the researchers are client-side: error closing gates that halt execution when anomalous responses are detected, response anomaly filtering, and append-logging only for audit trails that cannot be tampered with by the router itself. In the long term, the UCLA team is calling for cryptographic signature standards that would make LLM responses verifiable — the same architectural principle that makes Oracle onchain integration is a straightforward design requirement Rather than an afterthought.

Discover: The best pre-launch token sales